I have been studying ways to use AI with SPUM little by little since early last year.

More precisely, this is a topic I used to assign as a project to students at the schools where I lecture or mentor. Unfortunately, fewer computer science students than before specifically want to go into game development, so even though interest in AI is high, familiarity with Unity often is not. As a result, the topic never advanced very far.

Recently, however, one of the students I mentor has shown a strong interest in game development. They understood the topic I gave them and, although they are approaching it differently from what I originally had in mind, the result has been interesting enough that I decided to quickly build my own prototype as well.

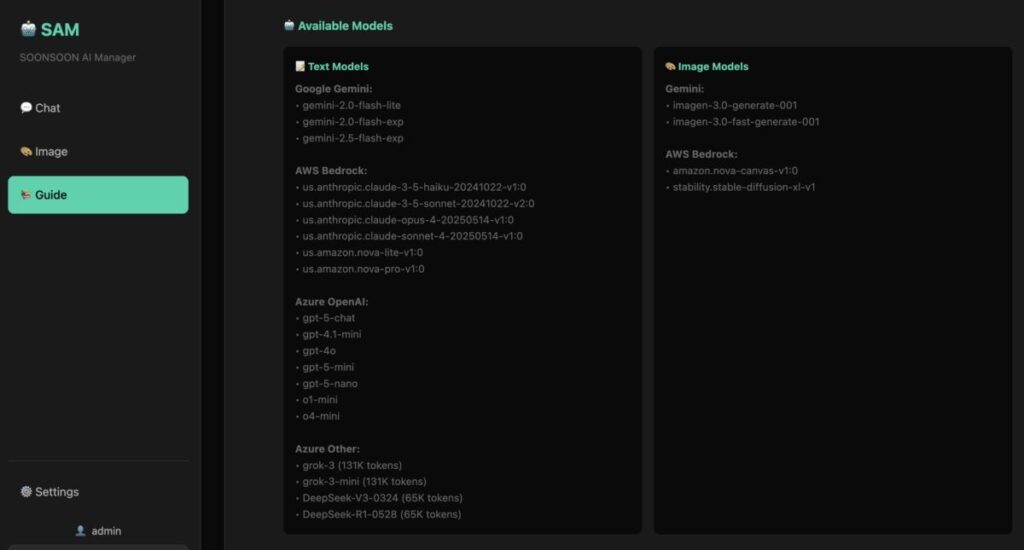

Using the SAM-AI system I have been planning, I listed and connected LLMs that can be accessed through services such as AWS Bedrock, Azure OpenAI, and Gemini.

I have been collecting those pieces one by one since last month to build an AI resource management layer, and now that they are gathered together, there are actually quite a lot of LLMs I can make use of.

Now it is time to connect those models to Unity and start controlling characters through natural-language commands.

At the moment, the prototype can only handle a few commands: movement, scouting, and moving all the way to the goal. But now that I have a basic feel for it, I plan to keep expanding it little by little.

For example, if I issue a command to move to the right, the character moves one tile to the right. If I say something like “move two spaces to the right,” then in theory it should move two tiles. In theory, anyway.
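To make the movement command concrete, here is a minimal sketch of how a model's reply could be turned into tile movement. It is not the prototype's actual code: the JSON shape (`action`, `direction`, `steps`), the `apply_move` function, and the grid conventions (y grows upward, blocked tiles stored as a set) are all assumptions for illustration. The prototype itself runs in Unity; Python is used here only to keep the sketch short.

```python
import json

# Hypothetical direction table; assumes x grows to the right and y grows upward.
DIRECTIONS = {"right": (1, 0), "left": (-1, 0), "up": (0, 1), "down": (0, -1)}


def apply_move(position, llm_reply, blocked, width, height):
    """Apply a move action (assumed JSON format) one tile at a time.

    Stops early if the next tile is blocked or outside the map.
    """
    action = json.loads(llm_reply)
    if not isinstance(action, dict) or action.get("action") != "move":
        raise ValueError("reply is not a move action")
    if action.get("direction") not in DIRECTIONS:
        raise ValueError("unknown direction")

    # Default to a single tile when the model omits the step count.
    steps = action.get("steps", 1)
    if isinstance(steps, bool) or not isinstance(steps, int) or steps < 1:
        raise ValueError("steps must be a positive integer")

    dx, dy = DIRECTIONS[action["direction"]]
    x, y = position
    for _ in range(steps):
        nx, ny = x + dx, y + dy
        inside = 0 <= nx < width and 0 <= ny < height
        if not inside or (nx, ny) in blocked:
            break  # do not walk through walls or off the map
        x, y = nx, ny
    return (x, y)
```

Validating the reply before moving is the design choice that matters here: when a model misreads "two spaces" or returns something malformed, the character stays put or stops early instead of doing something undefined.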

If I give a scouting command, the system checks nearby obstacles, derives around seven tiles that are reachable, and performs a scouting action based on that information. It is basically a scout mode.
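One way to derive a small set of reachable tiles around the character is a breadth-first search that stops after a fixed number of tiles. The sketch below assumes a 4-directional grid and a hypothetical `scout` function with a `max_tiles` limit of seven; the prototype may well pick its tiles differently.

```python
from collections import deque


def scout(start, blocked, width, height, max_tiles=7):
    """Return up to max_tiles reachable tiles, nearest first (BFS order)."""
    seen = {start}
    queue = deque([start])
    reachable = []
    while queue:
        x, y = queue.popleft()
        for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nxt = (x + dx, y + dy)
            if nxt in seen or nxt in blocked:
                continue  # already visited, or an obstacle
            if not (0 <= nxt[0] < width and 0 <= nxt[1] < height):
                continue  # outside the map
            seen.add(nxt)
            queue.append(nxt)
            reachable.append(nxt)
            if len(reachable) >= max_tiles:
                return reachable
    return reachable
```

Because the search expands outward tile by tile, anything walled off by obstacles never shows up in the result, which is what you want from information that gets handed to the model.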

Finally, if I issue a command to move to the goal, the LLM calculates what it believes is the optimal route based on the map information and then moves the character to the goal.
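Since the route comes from the LLM rather than from a pathfinding algorithm, it seems sensible to check it before the character starts walking. This is a minimal sketch of such a check, assuming the model returns the route as a list of `(x, y)` tiles; the `is_valid_route` function and that route format are my own illustration, not something the prototype is confirmed to use.

```python
def is_valid_route(route, start, goal, blocked, width, height):
    """Check that a proposed route is walkable from start to goal."""
    if not route or route[0] != start or route[-1] != goal:
        return False
    for x, y in route:
        if not (0 <= x < width and 0 <= y < height):
            return False  # tile is outside the map
        if (x, y) in blocked:
            return False  # tile is an obstacle
    # Every step must move exactly one tile horizontally or vertically.
    for (x1, y1), (x2, y2) in zip(route, route[1:]):
        if abs(x1 - x2) + abs(y1 - y2) != 1:
            return False
    return True
```

A route that fails the check could be sent back to the model with the reason attached, or replaced by a conventional pathfinder as a fallback.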

Of course, not every model performs well. Faster “flash” style models, for example, sometimes fail to properly understand the system prompt and end up chasing only the literal shortest path.

Now that I have actually built and run the prototype, I can already see a number of fun and unexpected behaviors. The outcome differs from my original expectations in several ways, and it has raised quite a few interesting design questions. I will share more about those later.

I am thinking of eventually polishing this project and releasing it as open source for small teams that want to build AI-powered games, for research purposes, or for use in student classes.

I had originally wanted to do this with students two years ago, but it was difficult to gather and guide students with a strong game-development focus, so I was never able to push it forward properly. It feels like now I may finally be able to do that.

Once the students’ results are far enough along, I plan to organize them and share them as well.

If I am being honest, I would love to build a research lab that keeps exploring and developing systems like this. Since I do not have the right conditions for that yet, maybe I should at least start some kind of online study group.